- simple.ai by @dharmesh

Prompts, Skills and Plugins: Understand The Hierarchy of an LLM Harness

Explaining every layer of an LLM harness (the simple way)

Do you know the difference between skills and plugins? How about MCP and API?

If you've been using AI for any length of time, you've probably heard a sea of terms floating around: skills, tools, MCP servers, APIs, CLIs…

And yet, most AI users I’ve talked to, while early adopters in their own right, will go a long, long time before dipping their toes into the deeper waters where all the magic happens.

It all starts with defining the harness.

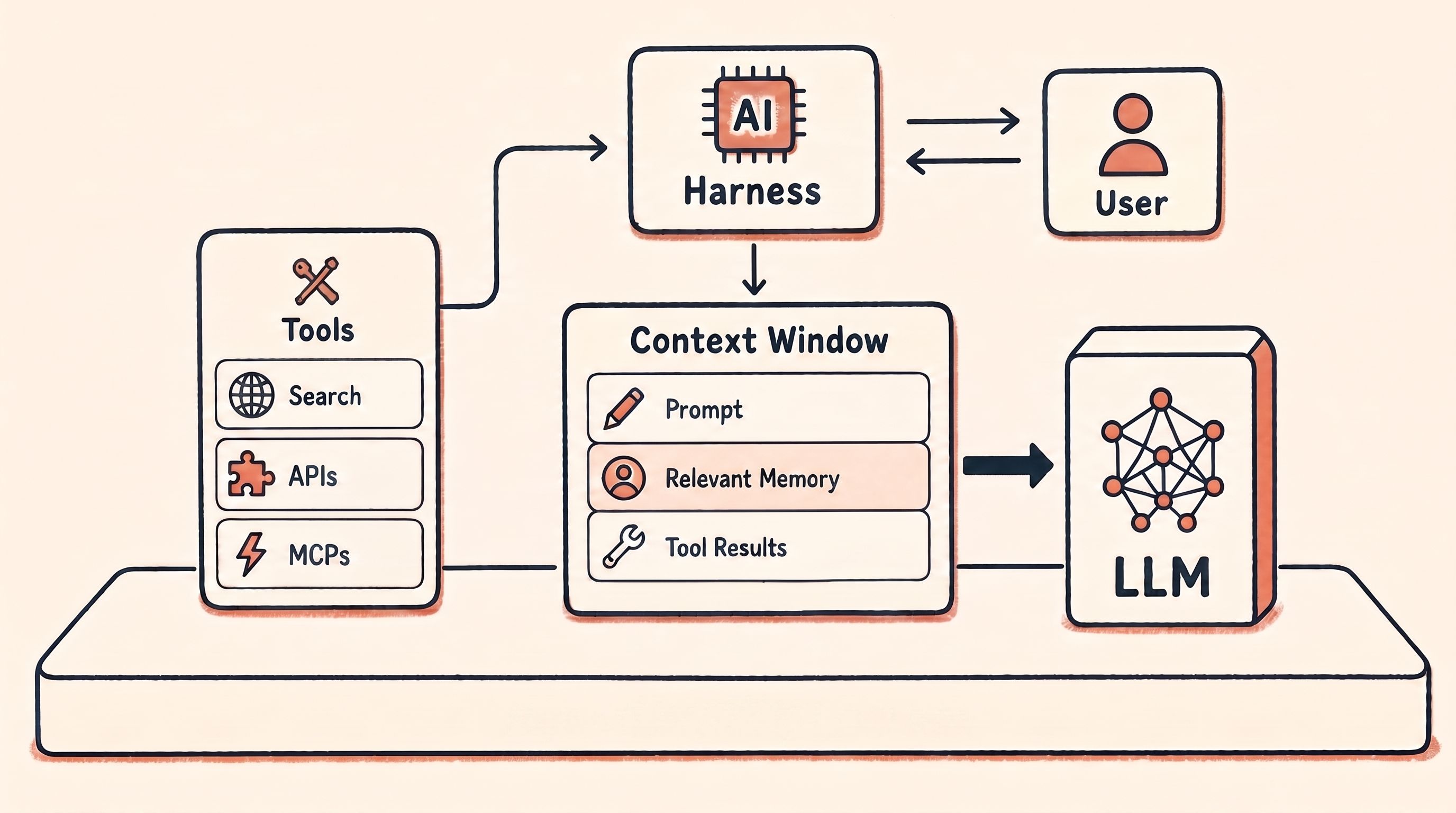

These days, when AI feels limited, the large language model (LLM) itself is usually fine; it’s the harness that needs more attention. Think of the LLM as the CPU in a computer. It ships from the factory with a set of instructions it can execute, but the CPU alone can’t do much. It can’t store files. It can’t show you things on a screen. It can’t connect to the Internet. For all of that, you need an operating system (like macOS or Windows). Same with an LLM: on its own, it is limited to whatever “shipped” from the factory (its training data).

An LLM by itself is just a text-completion engine. It doesn't know who you are, can't remember between chats, can't access your tools, can't take actions, can't run software.

The harness is everything that wraps around the model and fills in those gaps. It isn't one thing, though -- it's a stack of layers, each adding its own capabilities.

So today, I want to map out the harness one layer at a time:

How to capture quick wins with personalization

How to take your AI even further (with MCPs and APIs)

Which layer you’re on today (and how to climb higher)

Personalize Your Harness: Quick Wins

Picture the harness as a ladder. Not a journey to climb to the top, and not a difficulty curve where each rung is harder than the last. It's just a way to see AI's layered capabilities so you can spot which one your work actually needs next.

The first few rungs are where a tiny amount of setup effort can quickly compound into a much higher-quality AI experience.

The way I think about the first few rungs: they're about giving the AI more of you.

More context, more process, more reach. The model doesn't get smarter (its IQ doesn’t go up), but its EQ does, because it can better understand you and meet your needs.

A prompt is what you type into the chat. Better prompts help, but the bigger shift over the last couple of years has been from optimizing how you ask to optimizing what the AI has access to when it thinks.

Everyone knows prompts. But here’s how to start taking them further:

Custom instructions are the reusable context you don’t need to keep retyping. Every new conversation with ChatGPT is essentially a blank slate. Outside of memory, project files, or other saved context, it doesn't automatically know who you are, what you do, or how you prefer to communicate.

Custom instructions solve that by letting you fill in the blanks once. Your answers get added to the context the AI uses when responding, so each conversation can start fresh while your baseline context stays. Yay, efficiency!
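To make the “blank slate” point concrete, here's a minimal Python sketch of what a harness might do with custom instructions: save them once, then inject them ahead of your first message in every new conversation. The `start_conversation` function, the sample instructions, and the role/content message format (modeled loosely on common chat APIs) are all assumptions for illustration, not how ChatGPT implements this internally.

```python
# Hypothetical sketch: custom instructions are saved once and prepended to every new chat.
# The role/content message format is modeled loosely on common chat APIs (an assumption).

CUSTOM_INSTRUCTIONS = (
    "I run marketing at a small software company. "
    "Keep answers short and use plain language."
)

def start_conversation(user_prompt: str, custom_instructions: str = CUSTOM_INSTRUCTIONS) -> list:
    """Build the context for a brand-new conversation.

    The chat history starts empty every time, but the saved custom
    instructions are injected ahead of the user's first message.
    """
    messages = []
    if custom_instructions:
        messages.append({"role": "system", "content": custom_instructions})
    messages.append({"role": "user", "content": user_prompt})
    return messages

if __name__ == "__main__":
    for msg in start_conversation("Draft a subject line for our product launch email."):
        print(f"{msg['role']}: {msg['content']}")
```

The conversation itself still resets; only the small, stable piece of context you wrote once travels with it.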

That brings us to skills.

A skill is a reusable playbook. Skills are most closely associated with Claude, and they teach the AI how a person, team, or company does a specific kind of work, through instructions, metadata, scripts, templates, examples, and more. A process you keep re-explaining becomes something the AI can just follow, like an SOP (standard operating procedure) in the workplace.
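Here's a rough sketch of a skill as a file the harness can load on demand. It assumes a layout loosely modeled on the `SKILL.md` files Claude uses (a short header with a name and description, followed by the instructions), and it swaps in naive keyword matching where a real system would let the model decide which skill fits. The skill text and function names are hypothetical.

```python
# Hypothetical sketch of a "skill": a named, reusable playbook the harness loads on demand.
# The header layout is loosely modeled on Claude's SKILL.md files; the parsing and the
# keyword matching are simplifications for illustration.
from typing import Optional

WEEKLY_REPORT_SKILL = """---
name: weekly-report
description: Write the team's weekly status report
---
1. Start with the three most important wins.
2. List blockers, each with an owner.
3. End with next week's priorities, one sentence each.
"""

def parse_skill(text: str) -> dict:
    """Split a skill file into metadata (name, description) and instructions."""
    _, header, body = text.split("---\n", 2)
    meta = {}
    for line in header.strip().splitlines():
        key, value = line.split(":", 1)
        meta[key.strip()] = value.strip()
    meta["instructions"] = body.strip()
    return meta

def pick_skill(request: str, skills: list) -> Optional[dict]:
    """Return the first skill whose description shares a meaningful word with the request.

    Words of three letters or fewer ("the", "our", ...) are ignored so they don't cause false matches.
    """
    words = {w for w in request.lower().split() if len(w) > 3}
    for skill in skills:
        desc_words = {w for w in skill["description"].lower().split() if len(w) > 3}
        if words & desc_words:
            return skill
    return None

if __name__ == "__main__":
    skill = pick_skill("Please write our weekly status report", [parse_skill(WEEKLY_REPORT_SKILL)])
    if skill:
        print(skill["instructions"])
```

One reason for this shape: only the short name and description need to sit in the context window all the time, and the full playbook gets pulled in when a request calls for it.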

The exact boundary of "skill" varies by platform, so it's not a universal standard. In ChatGPT, similar functionality can show up through Projects, Custom GPTs, memory, files, and instructions. And here, "skill" doesn't mean a human career skill -- it means a reusable process for the AI.

A plugin is a way to extend a system. You may have used plugins in pre-AI software, like Photoshop, or even Minecraft. In the AI world, a plugin is a modular software component that lets AI agents connect with external systems, applications, and data sources. Tools are individual functions an AI can call, and skills are instructions that teach the AI how to perform specific tasks; plugins bundle multiple capabilities like these into a packaged extension that expands what an AI system can do.

Climb those four rungs -- prompts, custom instructions, skills, and plugins -- and you move from a barebones chat window to an AI system that knows who you are, understands how you work, and can reach the apps where your stuff lives.

That's a lot of leverage for a small amount of setup.

Below, we’ll get into how we can extend those capabilities even further.

Extend Your Harness: Power User Playbook

The first four rungs gave the AI more of you. The next three give it more agency.

Instead of just answering questions, the AI starts actually doing things.

Doing things can sometimes require tools.

A tool is a single action the AI can take. Web search. A stock price lookup. A weather forecast retrieval. If a plugin is a toolbox, tools are what's inside it -- one verb, one job each. The Gmail plugin, for instance, contains tools like "send email," "search inbox," and "archive email."
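Here's a minimal sketch of that idea: each tool is one verb with a description and an argument schema, plus the code that actually runs it. The `Tool` class, the stub Gmail-style functions, and the field names are assumptions; many chat APIs describe tools with a name, a description, and a JSON Schema, but the exact fields vary by vendor. Note that `tool_menu` shows the model only the descriptions, never the code.

```python
# Hypothetical sketch: each tool is one verb with one job, plus a description the model can read.
# The name/description/JSON-Schema shape mirrors how many chat APIs describe tools,
# but exact field names vary by vendor (an assumption here).
import json
from dataclasses import dataclass
from typing import Callable

@dataclass
class Tool:
    name: str
    description: str
    parameters: dict          # JSON Schema describing the arguments
    run: Callable[..., str]   # code the harness executes (the model never runs it)

def send_email(to: str, subject: str) -> str:
    return f"(pretend) sent '{subject}' to {to}"        # stub: no real email is sent

def search_inbox(query: str) -> str:
    return f"(pretend) 0 messages matching '{query}'"   # stub: no real inbox is searched

GMAIL_TOOLS = [
    Tool("send_email", "Send an email", {
        "type": "object",
        "properties": {"to": {"type": "string"}, "subject": {"type": "string"}},
        "required": ["to", "subject"],
    }, send_email),
    Tool("search_inbox", "Search the inbox", {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    }, search_inbox),
]

def tool_menu(tools: list) -> list:
    """What the harness shows the model: names, descriptions, and schemas, but no code."""
    return [{"name": t.name, "description": t.description, "parameters": t.parameters}
            for t in tools]

if __name__ == "__main__":
    print(json.dumps(tool_menu(GMAIL_TOOLS), indent=2))
```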

Here's a quirky detail: when the LLM needs a tool, it doesn’t actually use the tool directly (because, once again, it’s kind of frozen in time). Instead, the harness does something super-clever. When it passes a prompt to the LLM, the harness also describes a set of tools that the LLM can pretend it has access to, like “web_search”. If the LLM needs the web_search tool, it writes that request into its output, which goes back to the harness. Something like: “Please invoke the web_search tool and do a search on Thai restaurants in Boston”. The harness then actually executes the web_search tool, gets the results, adds them to the context window with something like “Here are the results of the web_search for Thai restaurants in Boston…[results here]”, and passes that context back to the LLM. Now the LLM has the search results and can go about its merry business.

I know this sounds a bit convoluted, but all tools work this way, and it dramatically expands the capabilities of an otherwise static/fixed LLM.

So, a short summary of what happens: When the harness invokes the LLM, it sends it a list of available tools. If the LLM wants to use one or more of those tools, it “tells” the harness which tools it wants to use. The harness uses those tools on behalf of the LLM and then passes the results back.
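The loop described above fits in a few lines of Python. In this sketch, `fake_llm` is a hypothetical stand-in for a real model (it asks for a search once, then answers), `web_search` returns canned text, and the message shapes are assumptions; the point is the back-and-forth, not any vendor's API.

```python
# Minimal sketch of the tool-calling loop between a harness and a model.
# `fake_llm` stands in for a real LLM and `web_search` returns canned text;
# the message shapes are assumptions, not any vendor's API.

def web_search(query: str) -> str:
    # Stub: a real harness would call a search service here.
    return f"Top results for '{query}': Thai Place A, Thai Place B"

TOOLS = {"web_search": web_search}

def fake_llm(messages: list, tool_names: list) -> dict:
    """Pretend model: requests a search first, then answers from the results in its context."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        if "web_search" in tool_names:
            # The model can't search; it can only *ask* the harness to.
            return {"type": "tool_call", "name": "web_search",
                    "arguments": {"query": "Thai restaurants in Boston"}}
        return {"type": "answer", "text": "I can't look that up without a search tool."}
    return {"type": "answer", "text": f"Based on the search: {tool_results[-1]['content']}"}

def run_harness(user_prompt: str, max_steps: int = 5) -> str:
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        # 1. Send the context window plus the list of available tools.
        reply = fake_llm(messages, list(TOOLS))
        # 2. If the model answered, we're done.
        if reply["type"] == "answer":
            return reply["text"]
        # 3. Otherwise the harness, not the model, runs the requested tool...
        messages.append({"role": "assistant", "tool_call": reply})
        result = TOOLS[reply["name"]](**reply["arguments"])
        # 4. ...and puts the result back into the context for the next model call.
        messages.append({"role": "tool", "name": reply["name"], "content": result})
    raise RuntimeError("Gave up: the model kept requesting tools.")

if __name__ == "__main__":
    print(run_harness("Find me Thai food in Boston"))
```

The `max_steps` cap is a design choice worth keeping in any real loop: it stops a model that keeps asking for tools from running forever.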

It's a clever way around the fact that LLMs only know what they were trained on and what's in their context window.

Plugins are collections of skills, tools, and resources for accomplishing a specific set of tasks.
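As a sketch of that bundling, here's a hypothetical `Plugin` class that packages tools, skills, and resources and installs them into a harness in one step. The class, the Gmail-flavored contents, and the registry layout are made up for illustration; real plugin formats differ by platform.

```python
# Hypothetical sketch: a plugin packages tools, skills, and resources together.
# Class and field names are illustrative, not any vendor's plugin format.
from dataclasses import dataclass, field
from typing import Callable, Dict

@dataclass
class Plugin:
    name: str
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)  # actions
    skills: Dict[str, str] = field(default_factory=dict)                # playbooks (instructions)
    resources: Dict[str, str] = field(default_factory=dict)             # reference material

    def install(self, harness: dict) -> None:
        """Merge everything the plugin provides into the harness registry in one step."""
        for kind in ("tools", "skills", "resources"):
            harness.setdefault(kind, {}).update(getattr(self, kind))

gmail = Plugin(
    name="gmail",
    tools={
        "send_email": lambda to, subject: f"(pretend) sent '{subject}' to {to}",
        "archive_email": lambda message_id: f"(pretend) archived {message_id}",
    },
    skills={"inbox-triage": "Archive newsletters, flag customer emails, draft replies to the rest."},
    resources={"reply-template": "Thanks for reaching out! ..."},
)

if __name__ == "__main__":
    harness = {}
    gmail.install(harness)
    print(sorted(harness["tools"]), sorted(harness["skills"]))
```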

Every rung above the prompt is just a way to put the right thing in the context window at the right time.

So if plugins are so great, why isn't every AI app loaded with them?

The problem is that, traditionally, every AI app needed its own version of each plugin. The Gmail plugin built for Claude wouldn't automatically work in ChatGPT. Someone had to build it twice. That kept the plugin universe small.

MCP (the Model Context Protocol) is the fix. Think of it like USB-C. Before USB-C, every kind of gadget had its own kind of cable. Now one standard works across phones, laptops, headphones, and almost everything else.

MCP does the same thing for AI: build a tool server once to the MCP standard, and any MCP-compatible AI can use it.
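For the curious, here's a simplified Python sketch of the core of an MCP tool server. MCP messages are JSON-RPC 2.0, and the spec defines `tools/list` (what tools exist) and `tools/call` (run one); this sketch handles just those two, with a stub `get_weather` tool. It deliberately skips the initialization handshake and the transport (such as stdio) that a real server needs, which is what the official MCP SDKs handle for you.

```python
# Simplified sketch of request handling inside an MCP tool server.
# Handles the JSON-RPC 2.0 methods "tools/list" and "tools/call" from the MCP spec,
# but skips the initialization handshake and the transport a real server needs.
import json

def get_weather(city: str) -> str:
    return f"(pretend) Sunny in {city}"  # stub standing in for a real weather API

TOOLS = {
    "get_weather": {
        "description": "Get today's weather for a city",
        "inputSchema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
        "fn": get_weather,
    }
}

def _reply(req_id, result=None, error=None) -> str:
    msg = {"jsonrpc": "2.0", "id": req_id}
    if error is not None:
        msg["error"] = error
    else:
        msg["result"] = result
    return json.dumps(msg)

def handle(raw: str) -> str:
    """Handle one JSON-RPC request string and return the response string."""
    req = json.loads(raw)
    method, req_id, params = req.get("method"), req.get("id"), req.get("params", {})
    if method == "tools/list":
        # Advertise each tool's name, description, and argument schema (but not its code).
        tools = [{"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
                 for name, t in TOOLS.items()]
        return _reply(req_id, {"tools": tools})
    if method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _reply(req_id, error={"code": -32602, "message": f"Unknown tool: {params.get('name')}"})
        text = tool["fn"](**params.get("arguments", {}))
        return _reply(req_id, {"content": [{"type": "text", "text": text}], "isError": False})
    return _reply(req_id, error={"code": -32601, "message": "Method not found"})

if __name__ == "__main__":
    print(handle(json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))
    print(handle(json.dumps({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                             "params": {"name": "get_weather", "arguments": {"city": "Boston"}}})))
```

Because the request and response shapes are standardized, any MCP-compatible client can discover and call `get_weather` without a custom integration, which is the USB-C point in practice.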

That's why the list of MCP-compatible systems is growing fast -- HubSpot, Slack, your files, your calendar, Gmail, Agent.ai. With one natural-language request, an AI can reach across several of them in a single flow.

Underneath most MCP servers sits the layer that makes them possible: APIs, and sometimes CLIs.

An API (Application Programming Interface) is how one piece of software talks to another.
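To see what “software talking to software” looks like, here's a self-contained Python sketch using only the standard library: one program serves a tiny HTTP API, and another calls it and reads the JSON reply. The `/price` endpoint, the `ACME` symbol, and its price are made up for the demo; real APIs usually add authentication, rate limits, and documentation on top.

```python
# Minimal sketch of one program calling another through an HTTP API.
# Both sides run locally with only the standard library; the /price endpoint,
# the ACME symbol, and its price are hypothetical demo data.
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import parse_qs, urlparse
from urllib.request import urlopen

PRICES = {"ACME": 42.0}  # made-up data for the demo

class PriceAPI(BaseHTTPRequestHandler):
    def do_GET(self):
        url = urlparse(self.path)
        symbol = parse_qs(url.query).get("symbol", [""])[0]
        if url.path != "/price" or symbol not in PRICES:
            self.send_response(404)
            self.end_headers()
            return
        body = json.dumps({"symbol": symbol, "price": PRICES[symbol]}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):  # silence the default per-request logging
        pass

def start_server() -> HTTPServer:
    server = HTTPServer(("127.0.0.1", 0), PriceAPI)  # port 0 = let the OS pick a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server

def get_price(base_url: str, symbol: str) -> float:
    """The 'client' program: it only knows the API's URL and response format."""
    with urlopen(f"{base_url}/price?symbol={symbol}") as resp:
        return json.load(resp)["price"]

if __name__ == "__main__":
    server = start_server()
    host, port = server.server_address[:2]
    print(get_price(f"http://{host}:{port}", "ACME"))
    server.shutdown()
```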

A CLI (Command Line Interface) is how you (or an AI agent) run software with typed commands instead of clicking around.
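And here's a minimal sketch of how a harness might let an agent run software by typed command: `run_cli` (a hypothetical helper) executes a command without a shell, enforces a simple allowlist, and hands the text output back for the model to read. The demo runs the current Python interpreter so it works on any machine with Python.

```python
# Minimal sketch of a harness exposing a command-line program as a tool.
# `run_cli` and the allowlist are hypothetical; the demo runs the current Python
# interpreter so it works anywhere Python does.
import subprocess
import sys

ALLOWED_PROGRAMS = {sys.executable}  # only programs you've explicitly approved

def run_cli(args: list, timeout: float = 10.0) -> str:
    """Run a typed command (no shell) and return its output as text for the model."""
    if not args or args[0] not in ALLOWED_PROGRAMS:
        return f"refused: {args[0] if args else '(empty command)'} is not on the allowlist"
    completed = subprocess.run(args, capture_output=True, text=True, timeout=timeout)
    return completed.stdout if completed.returncode == 0 else f"error: {completed.stderr}"

if __name__ == "__main__":
    print(run_cli([sys.executable, "-c", "print(2 + 3)"]))  # the agent "types" a command
    print(run_cli(["curl", "https://example.com"]))          # blocked by the allowlist
```

Passing the command as a list (rather than one shell string) plus an allowlist is a deliberate choice: an agent that can type commands should only be able to type the ones you meant to allow.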

Together with MCP, they form what I think of collectively as AUX, the agentic user experience. This is the rung where AI stops being a thing you talk to and becomes an agent that does work for you.

Where To Aim Your Attention Next

If you’ve made it this far, you’re already ahead of most AI users.

Like I said at the start of this post: the ladder isn't jargon to memorize. It's a map of what's possible, so you can pick the right next move in your AI education.

When you’re ready to improve your workflow, here's the rung to climb:

Want sharper answers → write clearer prompts.

Want the AI to know who you are → set up custom instructions.

Want it to do your work your way, repeatably → create a skill.

Want it talking to another app you already use → use MCP or connectors.

Want it taking actions, not just answering → use tools.

Want an agent to run software end-to-end → optimize for agentic user experience: APIs, MCP servers, and CLIs.

You don't have to climb every rung. A lot of useful AI work today happens in the first three or four. Knowing the whole ladder is what lets you keep going once you outgrow the rung you're on.

The people getting the most out of AI right now aren't doing it because they have a secret prompt. They've just climbed a rung or two above the prompt level.

The difference between someone who uses AI and someone who builds with it is mostly which rung they decided to keep going past.

—Dharmesh (@dharmesh)
